Can Decentralized Content Moderation Tools Re-Balance Governance of Online Speech?

The Future of Free Speech has launched a new prototype to test this idea.

Content moderation has become one of the central governance questions of the digital age. Decisions about whether to remove online speech determine the contours of public discourse. Despite growing regulatory intervention, particularly in the European Union, dissatisfaction with the current model persists.

Concerns about the over-removal of lawful speech, a lack of contextual sensitivity, and the concentration of power in private platforms remain unresolved. The challenge is not only how to moderate content effectively, but also how to do so in a way that is legitimate, proportionate, and compatible with freedom of expression.

In partnership with Analysis & Numbers, The Future of Free Speech has launched a new distributed content-moderation prototype that offers a concrete alternative. Rather than reinforcing centralized moderation, it redistributes decision-making power to users and communities.

The key rationale for the prototype’s development is that such distributed moderation models directly address structural flaws in both platform governance and regulatory approaches and may represent a necessary evolution in content moderation design.

To try the prototype, visit the link below and press “continue to demo” on the home page.

The Structural Limits of Centralized Moderation

Content moderation has been defined as the process by which platforms screen, evaluate, categorize, approve, or remove user-generated content in accordance with relevant policies. Centralized moderation operates through standardization. Platforms apply uniform rules across diverse contexts, often relying on automated systems to manage scale. This produces efficiency but at the cost of nuance.

Moderation decisions are shaped not only by legal requirements but by platform incentives, risk management strategies, and internal governance structures. The European Union’s Digital Services Act (DSA) represents the most comprehensive attempt to regulate content moderation. It imposes obligations on platforms to remove illegal content, implement notice-and-action mechanisms, and ensure transparency and accountability.

However, the DSA operates within, and arguably reinforces, the centralized model it seeks to regulate. It assumes identifiable intermediaries capable of exercising control over content and enforcing rules across their systems. This creates a regulatory paradox. While the DSA aims to protect fundamental rights, including freedom of expression, it simultaneously incentivizes platforms to adopt more restrictive moderation practices.

One consequence of this regulatory framework is systematic over-removal. Platforms are incentivized to err on the side of caution, particularly where regulatory or reputational risks are high. In a 2024 study, we found that a substantial majority (87.5% to 99.7%) of deleted comments on Facebook and YouTube in France, Germany, and Sweden were legally permissible, suggesting that platforms, pages, or channels may be over-removing content to avoid regulatory penalties. Regulatory pressure may exacerbate this tendency by encouraging platforms to remove lawful but controversial content in order to avoid liability or public backlash.

Automation intensifies this dynamic. AI-based moderation systems, while necessary at scale, struggle with context, irony, and cultural nuance. Empirical research has shown that such systems can produce both false positives (over-removal) and false negatives (under-enforcement), raising concerns about both accuracy and fairness. The result is a system in which speech is filtered not only by law but also by platform risk calculations embedded in algorithmic design.
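The trade-off between the two error types can be illustrated with a minimal Python sketch. The scores and labels below are invented purely for illustration and are not drawn from any study or from the prototype; the function name `error_counts` is likewise hypothetical.

```python
def error_counts(scored, threshold):
    """Count errors when every score at or above `threshold` is removed.

    `scored` is a list of (score, actually_violating) pairs.
    Returns (false_positives, false_negatives).
    """
    # False positive: lawful content that would be removed (over-removal).
    false_positives = sum(1 for s, bad in scored if s >= threshold and not bad)
    # False negative: violating content that would stay up (under-enforcement).
    false_negatives = sum(1 for s, bad in scored if s < threshold and bad)
    return false_positives, false_negatives


# Hypothetical data: (model score, whether the comment really violates the rules).
sample = [(0.95, True), (0.80, False), (0.60, True), (0.40, False), (0.10, False)]

cautious = error_counts(sample, threshold=0.5)    # removes more, risks over-removal
permissive = error_counts(sample, threshold=0.9)  # removes less, risks under-enforcement
```

On this toy data, the lower threshold removes one lawful comment and misses nothing, while the higher threshold removes no lawful comments but misses one violating comment. Neither setting eliminates both kinds of error.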

Moreover, regulation struggles to keep pace with technological change. Emerging environments such as metaverses challenge the assumption that content can be centrally controlled and removed.

Decentralization and the Crisis of Control

The rise of decentralized technologies introduces a fundamental shift in the structure of online systems. In decentralized networks, data is distributed across multiple nodes, with no single entity exercising full control. This has significant implications for content moderation.

Traditional models rely on central intermediaries to detect, evaluate, and remove content. In decentralized systems, these functions become fragmented or absent. Decentralization can enhance privacy, security, and resistance to censorship. At the same time, it creates new challenges for governance. Without central control, enforcing rules becomes difficult, and harmful content may persist.

Against this backdrop, distributed moderation offers a different paradigm. Rather than concentrating power in platforms or relying solely on regulation, it redistributes decision-making to users and communities.

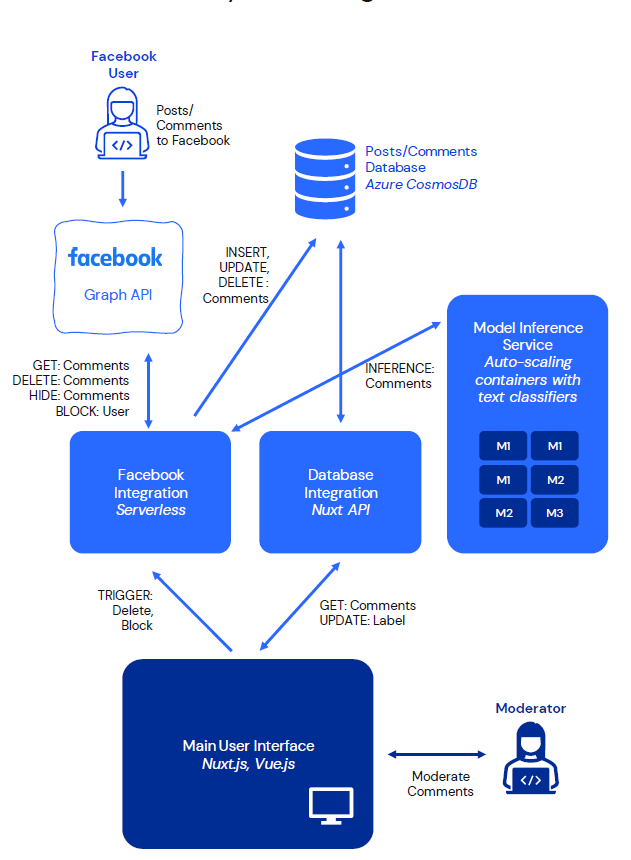

The prototype provides a practical example of this approach, integrating AI classification with user-defined moderation thresholds, enabling context-sensitive decision-making. The system operates through a structured pipeline: comments are collected via platform APIs, analyzed by machine-learning models, stored in a database, and presented to moderators via a prioritized interface. Moderators can then take actions such as hiding, deleting, or blocking content.
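The classify-and-prioritize steps of such a pipeline might be sketched roughly as follows. This is not the prototype's code: the names `ScoredComment`, `build_review_queue`, and `toy_classifier` are assumptions made for illustration, the word-list classifier merely stands in for a real machine-learning model, and the API collection and database steps are replaced by an in-memory list.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass
class ScoredComment:
    comment_id: str
    text: str
    score: float  # hypothetical model output in [0, 1]; higher means more likely harmful


def build_review_queue(
    comments: Iterable[tuple[str, str]],
    classify: Callable[[str], float],
) -> list[ScoredComment]:
    """Score each (id, text) pair and return the comments, highest score first."""
    scored = [ScoredComment(cid, text, classify(text)) for cid, text in comments]
    # sorted() is stable, so comments with equal scores keep their arrival order.
    return sorted(scored, key=lambda c: c.score, reverse=True)


def toy_classifier(text: str) -> float:
    """Stand-in for a real model: the fraction of words found in a tiny flag list."""
    flagged = {"idiot", "stupid"}
    words = text.lower().split()
    if not words:
        return 0.0
    return sum(w.strip(".,!?") in flagged for w in words) / len(words)


# In a real deployment these would come from a platform API and be stored in a database.
incoming = [
    ("c1", "Thanks for sharing this."),
    ("c2", "You stupid idiot!"),
    ("c3", "That is a stupid take."),
]
queue = build_review_queue(incoming, toy_classifier)
```

The queue only orders comments for a moderator's attention. It takes no action on any of them.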

Crucially, the system does not automate final decisions. AI is used to assist, not replace, human judgment. This hybrid model addresses the limitations of both purely automated and purely manual moderation. Normatively, the system embodies a shift from standardization to pluralism. Different communities can adopt different thresholds for what constitutes harmful content, reflecting their specific contexts and values.
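A minimal Python sketch of these two ideas, community-specific thresholds and a human making the final call, is given below. The class and function names, the example communities, and the threshold values are all hypothetical. Only the action names (hide, delete, block) come from the description above.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional

# "keep" is added here as an assumed fourth option: the moderator leaves the comment up.
ALLOWED_ACTIONS = {"keep", "hide", "delete", "block"}


@dataclass
class Community:
    name: str
    flag_threshold: float  # scores at or above this value are surfaced for review


def needs_review(score: float, community: Community) -> bool:
    """The model only decides what a moderator sees, not what happens to it."""
    return score >= community.flag_threshold


def final_action(score: float, community: Community, decision: Optional[str]) -> str:
    """Return the action applied to a comment; nothing is removed without a human decision."""
    if not needs_review(score, community):
        return "keep"
    if decision is None:
        return "pending"  # flagged, but still waiting for a moderator
    if decision not in ALLOWED_ACTIONS:
        raise ValueError(f"unknown action: {decision}")
    return decision


# Two hypothetical communities with different tolerances for the same score.
debate_forum = Community("debate-forum", flag_threshold=0.9)
family_page = Community("family-page", flag_threshold=0.4)
score = 0.6

in_debate_forum = final_action(score, debate_forum, None)
in_family_page = final_action(score, family_page, None)
after_moderator = final_action(score, family_page, "hide")
```

The same comment is left alone in the first community and queued for review in the second, and in neither case does the score alone trigger removal.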

The Pros and Cons

Distributed moderation directly addresses the structural problems of centralization. First, it reduces over-removal by allowing communities to calibrate their own thresholds. This counters the precautionary bias inherent in centralized systems and aligns moderation more closely with legal standards of permissible speech.

Second, it enhances contextual sensitivity. Community moderators are better positioned to understand the nuances of language, culture, and interaction within their spaces. This improves both accuracy and legitimacy.

Third, it restores user agency. As noted in research on decentralized governance, empowering users can “democratize the process” of content regulation and reduce reliance on opaque platform decisions. These features are particularly important given concerns about the concentration of power in digital platforms. By redistributing moderation authority, distributed systems can mitigate the risks associated with centralized control.

At the same time, the model avoids the pitfalls of full decentralization. By retaining a structured interface, AI support, and integration with existing platforms, it ensures that moderation remains feasible and effective.

However, distributed moderation is not a panacea. It introduces new challenges, particularly regarding consistency, accountability, and potential fragmentation. Different communities may adopt divergent standards, raising questions about equality and fairness. There is also a risk that some communities may tolerate harmful content, particularly in the absence of strong oversight.

Moreover, empowering users requires capacity. Effective moderation depends not only on tools but on training, resources, and institutional support. Without these, distributed systems may struggle to achieve their full potential.

There are also legal questions. Existing regulatory frameworks are designed for centralized actors, and adapting them to distributed models will require careful consideration. Nevertheless, these challenges are not unique to distributed systems. They reflect broader tensions in content moderation between centralization and pluralism, efficiency and legitimacy, safety and freedom.

Can Decentralization Be the Future?

The current model of content moderation is reaching its limits. Centralized systems struggle with context, scale, and legitimacy, while regulatory frameworks risk reinforcing the very dynamics they seek to correct. Distributed moderation offers a promising alternative that could rebalance the relationship between platforms, users, and regulators.

The prototype discussed in this article demonstrates that such models are not merely theoretical. They can be built, tested, and deployed. The question is not whether distributed moderation will replace centralized systems entirely. It is whether it can complement and reshape them in ways that better align with democratic values and fundamental rights.

As online environments continue to evolve, particularly with the rise of decentralized technologies and immersive platforms, the need for such rethinking will only become more urgent.

Natalie Alkiviadou is a Senior Research Fellow at The Future of Free Speech. Her research interests lie in freedom of expression, the far-right, hate speech, hate crime, and non-discrimination.